Chinese Company: DeepSeek AI is a Chinese company, which raises concerns for some users about data privacy and potential government access to data. There are also data privacy and security risks associated with AI-driven data collection. That kind of release allows end users to easily fine-tune those model parameters with additional training data for more targeted purposes. A fully open source release, including training code, can give researchers more visibility into how a model works at a core level, potentially revealing biases or limitations that are inherent to the model's architecture instead of its parameter weights. Beyond self-rewarding, we are also dedicated to uncovering other general and scalable rewarding methods to consistently advance the model capabilities in general scenarios. Methods such as grouped-query attention exploit the possibility of the same overlap, but they do so ineffectively by forcing attention heads that are grouped together to all respond similarly to queries. This is because cache reads are not free: we need to save all those vectors in GPU high-bandwidth memory (HBM) and then load them into the tensor cores when we need to involve them in a computation.
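The grouping that grouped-query attention imposes can be sketched in a few lines. This is a minimal illustration, not any particular model's implementation: the function names are hypothetical, and the 64 query heads sharing 8 key/value heads are an assumed configuration chosen only to make the mapping concrete.

```python
def kv_head_for_query_head(query_head: int, n_query_heads: int, n_kv_heads: int) -> int:
    """Return the index of the shared key/value head that a query head reads from."""
    if n_query_heads % n_kv_heads != 0:
        raise ValueError("n_query_heads must be a multiple of n_kv_heads")
    group_size = n_query_heads // n_kv_heads
    # All query heads in the same group are forced to use one set of keys and values.
    return query_head // group_size


def cache_reduction_factor(n_query_heads: int, n_kv_heads: int) -> float:
    """How much smaller the KV cache is compared with one KV head per query head."""
    return n_query_heads / n_kv_heads


# Assumed, illustrative configuration: 64 query heads sharing 8 KV heads.
N_QUERY_HEADS = 64
N_KV_HEADS = 8

mapping = [kv_head_for_query_head(h, N_QUERY_HEADS, N_KV_HEADS) for h in range(N_QUERY_HEADS)]
print(mapping[:10])
print(cache_reduction_factor(N_QUERY_HEADS, N_KV_HEADS))
```

Under these assumptions the cache shrinks by the group size, but every query head in a group sees exactly the same keys and values, which is the rigidity the paragraph above describes.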

For example, GPT-3 had 96 attention heads with 128 dimensions each and 96 blocks, so for each token we’d need a KV cache of 2.36M parameters, or 4.7 MB at a precision of 2 bytes per KV cache parameter. Low-rank compression, on the other hand, allows the same information to be used in very different ways by different heads. This causes gradient descent optimization methods to behave poorly in MoE training, often leading to "routing collapse", where the model gets stuck always activating the same few experts for every token instead of spreading its knowledge and computation around all of the available experts. This will mean those experts get almost all of the gradient signal during updates and become better while other experts lag behind, and so the other experts will continue not being picked, producing a positive feedback loop that results in other experts never getting chosen or trained. In this issue, I’ll cover some of the important architectural improvements that DeepSeek highlight in their report and explain why we should expect them to result in better performance compared to a vanilla Transformer. Once you see the approach, it’s immediately obvious that it cannot be any worse than grouped-query attention and it’s also likely to be significantly better.
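The GPT-3 arithmetic above can be checked with a short script. The function names are hypothetical, and the 100K-token context length in the last step is an assumption added here to show how the per-token figure scales; it is not part of the GPT-3 numbers quoted above.

```python
def kv_cache_params_per_token(n_heads: int, head_dim: int, n_layers: int) -> int:
    """Number of cached values per token: one key and one value vector per head per layer."""
    return 2 * n_heads * head_dim * n_layers


def kv_cache_bytes(n_tokens: int, n_heads: int, head_dim: int, n_layers: int,
                   bytes_per_param: int = 2) -> int:
    """Total KV cache size in bytes for a context of n_tokens tokens."""
    return n_tokens * kv_cache_params_per_token(n_heads, head_dim, n_layers) * bytes_per_param


# GPT-3 figures from the text: 96 heads, 128 dimensions per head, 96 blocks.
gpt3_params = kv_cache_params_per_token(n_heads=96, head_dim=128, n_layers=96)
gpt3_bytes_per_token = kv_cache_bytes(1, 96, 128, 96)

# Assumed context length of 100K tokens, for illustration only.
gpt3_bytes_100k = kv_cache_bytes(100_000, 96, 128, 96)

print(f"{gpt3_params:,} parameters per token")            # 2,359,296 (about 2.36M)
print(f"{gpt3_bytes_per_token / 1e6:.1f} MB per token")   # 4.7 MB
print(f"{gpt3_bytes_100k / 1e9:.0f} GB at 100K tokens")   # 472 GB
```

The factor of 2 in the first function accounts for storing both a key and a value vector; everything else is a straight product of the model dimensions.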

In models such as Llama 3.3 70B and Mistral Large 2, grouped-query attention reduces the KV cache size by around an order of magnitude. This rough calculation shows why it’s important to find ways to reduce the size of the KV cache when we’re working with context lengths of 100K or above. When a Transformer is used to generate tokens sequentially during inference, it needs to see the context of all the past tokens when deciding which token to output next. If every token needs to know all of its past context, this means that for every token we generate we must read the entire past KV cache from HBM. To get an intuition for routing collapse, consider trying to train a model such as GPT-4 with 16 experts in total and 2 experts active per token. Naively, this shouldn’t fix our problem, because we would have to recompute the actual keys and values each time we need to generate a new token.
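To make the routing-collapse intuition concrete, here is a toy simulation with 16 experts and 2 active per token. It is a deliberately crude sketch and not how any real MoE router is trained: the per-expert "logit", the Gaussian noise standing in for token-to-token variation, and the fixed `boost` given to whichever experts are picked are all assumptions made for illustration.

```python
import random


def simulate_routing(n_experts=16, top_k=2, steps=5000, boost=0.1, noise=1.0, seed=0):
    """Toy rich-get-richer router: picked experts become more likely to be picked again."""
    rng = random.Random(seed)
    logits = [0.0] * n_experts  # every expert starts out equally preferred
    history = []
    for _ in range(steps):
        # Noise stands in for the variation between tokens.
        scores = [logit + rng.gauss(0.0, noise) for logit in logits]
        chosen = sorted(range(n_experts), key=lambda i: scores[i], reverse=True)[:top_k]
        for i in chosen:
            # Assumed stand-in for "chosen experts receive the gradient and improve".
            logits[i] += boost
        history.append(chosen)
    return history


def top_k_share(history, n_experts=16, top_k=2):
    """Fraction of all routing slots taken by the top_k most-used experts."""
    counts = [0] * n_experts
    for chosen in history:
        for i in chosen:
            counts[i] += 1
    return sum(sorted(counts, reverse=True)[:top_k]) / sum(counts)


history = simulate_routing()
early_share = top_k_share(history[:100])
late_share = top_k_share(history[-1000:])
print(f"share of the 2 busiest experts, first 100 tokens: {early_share:.2f}")
print(f"share of the 2 busiest experts, last 1000 tokens: {late_share:.2f}")
```

With perfectly balanced routing the two busiest experts would take about 2/16 of the slots. In this toy setup the feedback loop pushes that share toward 1 as the run goes on, which is the collapse described above.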

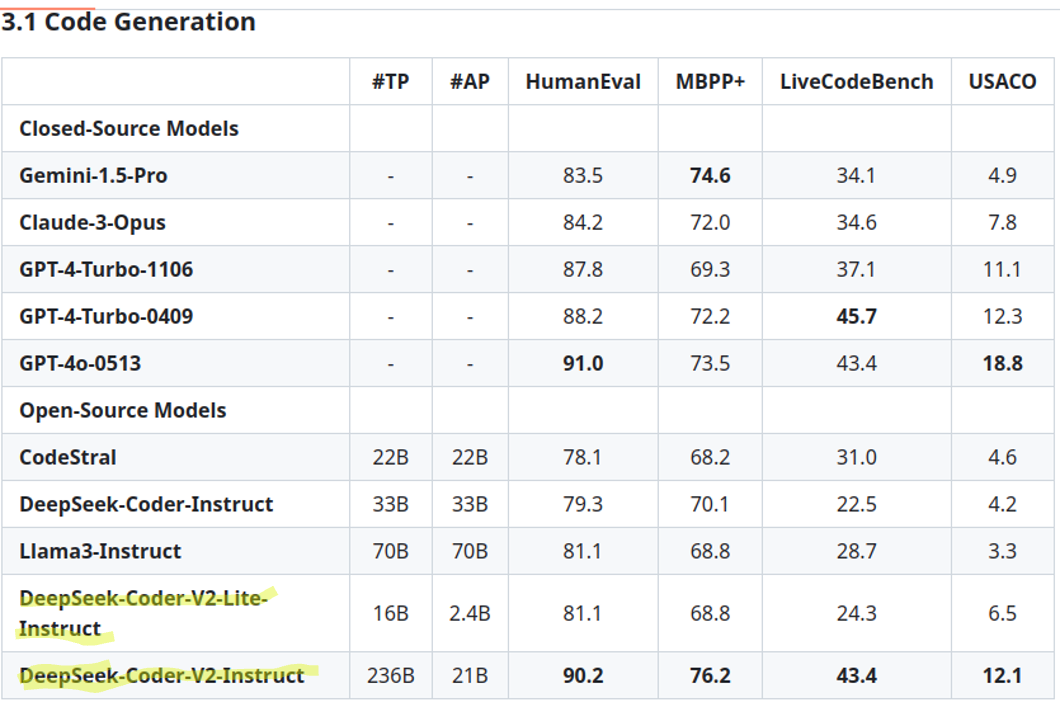

In theory, this could even have beneficial regularizing effects on training, and DeepSeek reports finding such effects in their technical reports. Other countries, including the United States, have said they may seek to block DeepSeek from government employees’ mobile devices, according to media reports. That means a company based in Singapore could order chips from Nvidia, with its billing address marked as such, but have them delivered to another country. It is nontrivial to address these training difficulties. Compared with DeepSeek 67B, DeepSeek-V2 achieves stronger performance, and meanwhile saves 42.5% of training costs, reduces the KV cache by 93.3%, and boosts the maximum generation throughput to more than 5 times that of DeepSeek 67B. On Codeforces, OpenAI o1-1217 leads with 96.6%, while DeepSeek-R1 achieves 96.3%. This benchmark evaluates coding and algorithmic reasoning capabilities. It has been recognized for achieving performance comparable to leading models from OpenAI and Anthropic while requiring fewer computational resources. DeepSeek vs. Closed-Source Giants: While companies like OpenAI and Google keep their models private, DeepSeek’s approach fosters community-driven improvement, potentially outpacing their scope of innovation. Note: while these models are powerful, they can sometimes hallucinate or provide incorrect information, so their output needs careful verification.