We update our DEEPSEEK to USD price in real time. Multi-head Latent Attention (MLA) is a new attention variant introduced by the DeepSeek team to improve inference efficiency. Benchmark results show that SGLang v0.3 with MLA optimizations achieves 3x to 7x higher throughput than the baseline system. The DeepSeek MLA optimizations were contributed by Ke Bao and Yineng Zhang. The LLaVA-OneVision contributions were made by Kaichen Zhang and Bo Li. LLaVA-OneVision is the first open model to achieve state-of-the-art performance in three important computer vision scenarios: single-image, multi-image, and video tasks. You can launch a server and query it using the OpenAI-compatible vision API, which supports interleaved text, multi-image, and video formats.

This is essentially a stack of decoder-only transformer blocks using RMSNorm, Grouped-Query Attention, some form of Gated Linear Unit, and Rotary Positional Embeddings. With these changes, I inserted the agent embeddings into the database.

These GPUs are interconnected using a combination of NVLink and NVSwitch technologies, ensuring efficient data transfer within nodes. In the A100 cluster, each node is configured with 8 GPUs, interconnected in pairs using NVLink bridges. I don't get "interconnected in pairs." An SXM A100 node should have 8 GPUs connected all-to-all over an NVSwitch.
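To make the OpenAI-compatible vision API mentioned above a little more concrete, here is a minimal sketch of how an interleaved text and multi-image request body could be assembled. The `text` and `image_url` content parts follow the OpenAI chat format; the helper name `build_vision_request`, the model name, and the URLs are placeholders I made up for illustration, and nothing here is sent to a server.

```python
import json


def build_vision_request(model, prompt_parts, max_tokens=128):
    """Build an OpenAI-style chat payload with interleaved text and images.

    prompt_parts is a list of ("text", str) or ("image", url) tuples,
    kept in the order they should appear in the user message.
    """
    content = []
    for kind, value in prompt_parts:
        if kind == "text":
            content.append({"type": "text", "text": value})
        elif kind == "image":
            content.append({"type": "image_url", "image_url": {"url": value}})
        else:
            raise ValueError(f"unsupported part kind: {kind!r}")
    return {
        "model": model,
        "messages": [{"role": "user", "content": content}],
        "max_tokens": max_tokens,
    }


payload = build_vision_request(
    "placeholder-model",  # hypothetical model name, not from the article
    [
        ("text", "What differs between these two images?"),
        ("image", "https://example.com/a.png"),  # placeholder URL
        ("image", "https://example.com/b.png"),  # placeholder URL
    ],
)
print(json.dumps(payload, indent=2))
```

The resulting dictionary would be posted as JSON to the server's chat completions endpoint; how the server is launched and which model name it expects depends on your setup.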

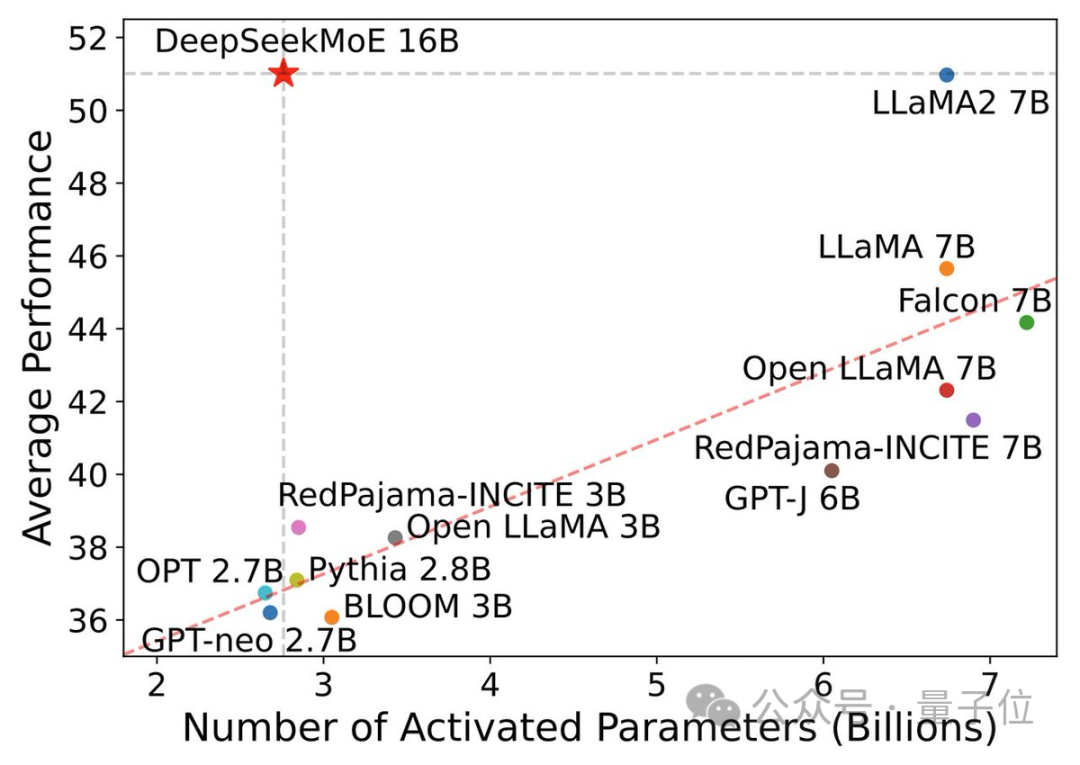

To facilitate seamless communication between nodes in both A100 and H800 clusters, we employ InfiniBand interconnects, known for their high throughput and low latency.

You can directly use Hugging Face's Transformers for model inference. You're ready to run the model. To get started quickly, you can run DeepSeek-LLM-7B-Chat with a single command on your own device. Other libraries that lack this feature can only run with a 4K context length.

torch.compile is a major feature of PyTorch 2.0. On NVIDIA GPUs, it performs aggressive fusion and generates highly efficient Triton kernels.

They also find evidence of data contamination, as their model (and GPT-4) performs better on problems from July/August. Despite being worse at coding, they state that DeepSeek-Coder-v1.5 is better, because it performs better than Coder v1 and LLM v1 on NLP and math benchmarks. Despite being the smallest model, with a capacity of 1.3 billion parameters, DeepSeek-Coder outperforms its larger counterparts, StarCoder and CodeLlama, in these benchmarks. At the large scale, we train a baseline MoE model comprising 228.7B total parameters on 578B tokens.
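As a rough picture of what "fusion" means in the torch.compile remark above, the sketch below contrasts three separate elementwise passes with a single fused pass. This is only a conceptual illustration in plain Python, not what torch.compile or Triton actually emit; the function names and the choice of scale, bias, and a tanh-approximated GELU are my own assumptions.

```python
import math


def gelu_tanh(x):
    """Tanh approximation of GELU, used here only as a sample elementwise op."""
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))


def unfused(xs, scale, bias):
    """Three passes over the data, each materializing an intermediate list."""
    scaled = [x * scale for x in xs]          # pass 1: writes an intermediate
    shifted = [s + bias for s in scaled]      # pass 2: reads it back, writes another
    return [gelu_tanh(v) for v in shifted]    # pass 3: final result


def fused(xs, scale, bias):
    """One pass: each element is read once and written once, no intermediates."""
    return [gelu_tanh(x * scale + bias) for x in xs]


xs = [-2.0, -0.5, 0.0, 0.5, 2.0]
same = all(math.isclose(a, b) for a, b in zip(unfused(xs, 1.5, 0.1), fused(xs, 1.5, 0.1)))
print(same)
```

On a GPU the benefit comes from avoiding the extra memory reads and writes between kernels; the arithmetic itself is unchanged, which is why both versions agree.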

The current "best" open-weights models are the Llama 3 series of models, and Meta seems to have gone all-in to train the best vanilla dense transformer. Eight for huge models) on the ShareGPT datasets.

DeepSeek unveiled its first set of models - DeepSeek Coder, DeepSeek LLM, and DeepSeek Chat - in November 2023. But it wasn't until last spring, when the startup released its next-gen DeepSeek-V2 family of models, that the AI industry started to take notice. It includes function calling capabilities, along with general chat and instruction following. "If the goal is applications, following Llama's structure for quick deployment makes sense.

SGLang w/ torch.compile yields up to a 1.5x speedup in the following benchmark. In SGLang v0.3, we implemented various optimizations for MLA, including weight absorption, grouped decoding kernels, FP8 batched MatMul, and FP8 KV cache quantization. We enhanced SGLang v0.3 to fully support the 8K context length by leveraging the optimized window attention kernel from FlashInfer (which skips computation instead of masking) and refining our KV cache manager. We're excited to announce the release of SGLang v0.3, which brings significant performance improvements and expanded support for novel model architectures. Support for Transposed GEMM Operations.
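The difference between a window attention kernel that "skips computation" and one that masks can be shown with a small single-head sketch. This is a toy in plain Python under my own assumptions (causal sliding window, scaled dot-product scores, made-up function names); it is not FlashInfer's kernel, only the same idea at list scale.

```python
import math
import random


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def window_attention_masked(q, k, v, window):
    """Compute all n x n scores, then mask everything outside the window."""
    n, d = len(q), len(q[0])
    out = []
    for i in range(n):
        scores = [dot(q[i], k[j]) / math.sqrt(d) for j in range(n)]
        scores = [s if i - window < j <= i else float("-inf") for j, s in enumerate(scores)]
        w = softmax(scores)
        out.append([sum(w[j] * v[j][c] for j in range(n)) for c in range(len(v[0]))])
    return out


def window_attention_skipping(q, k, v, window):
    """Only touch the keys inside the window; masked positions are never computed."""
    n, d = len(q), len(q[0])
    out = []
    for i in range(n):
        js = range(max(0, i - window + 1), i + 1)
        w = softmax([dot(q[i], k[j]) / math.sqrt(d) for j in js])
        out.append([sum(wj * v[j][c] for wj, j in zip(w, js)) for c in range(len(v[0]))])
    return out


random.seed(0)
n, d, window = 6, 4, 3
q = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(n)]
k = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(n)]
v = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(n)]

masked = window_attention_masked(q, k, v, window)
skipped = window_attention_skipping(q, k, v, window)
max_diff = max(abs(a - b) for ra, rb in zip(masked, skipped) for a, b in zip(ra, rb))
print(max_diff)
```

Both functions return the same attention output, but the masked version does work proportional to n per query while the skipping version does work proportional to the window size, which is where the saving at long context comes from.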

With this unified interface, computation units can easily accomplish operations such as read, write, multicast, and reduce across the entire IB-NVLink-unified domain by submitting communication requests based on simple primitives.

Because HumanEval/MBPP is too easy (basically no libraries), they also test with DS-1000. Do they actually execute the code, à la Code Interpreter, or just tell the model to hallucinate an execution? I'd guess the latter, since code environments aren't that easy to set up. The DeepSeek-Coder-Base-v1.5 model, despite a slight decrease in coding performance, shows marked improvements across most tasks when compared to the DeepSeek-Coder-Base model. Other non-OpenAI code models at the time sucked compared to DeepSeek-Coder on the tested regime (basic problems, library usage, leetcode, infilling, small cross-context, math reasoning), and especially suck compared to their basic instruct FT.

In the same year, High-Flyer established High-Flyer AI, which was dedicated to research on AI algorithms and its basic applications. He knew the data wasn't in any other systems because the journals it came from hadn't been consumed into the AI ecosystem - there was no trace of them in any of the training sets he was aware of, and basic knowledge probes on publicly deployed models didn't seem to indicate familiarity. While encouraging, there is still much room for improvement.
