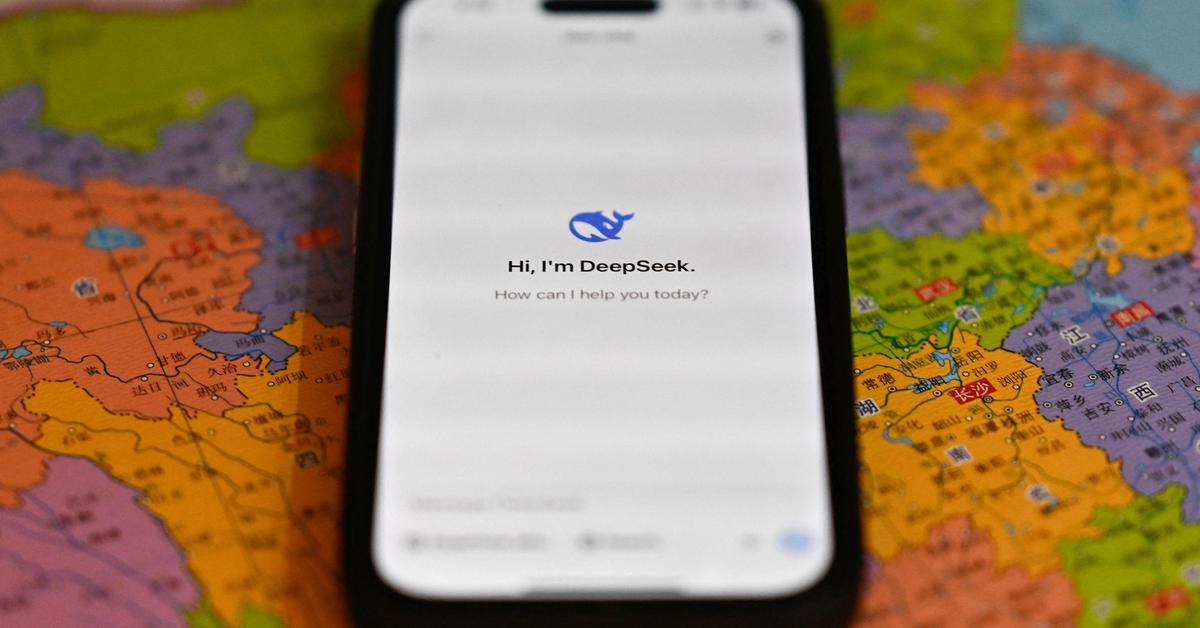

A company based in China that aims to "unravel the mystery of AGI with curiosity" has released DeepSeek LLM, a 67 billion parameter model trained from scratch on a dataset of 2 trillion tokens. Instruction tuning: to improve the performance of the model, they collect around 1.5 million instruction data conversations for supervised fine-tuning, "covering a variety of helpfulness and harmlessness topics". DeepSeek-Coder and DeepSeek-Math were used to generate 20K code-related and 30K math-related instruction data, which were then combined with an instruction dataset of 300M tokens.

The DeepSeek-V3 paper introduces a large MoE language model with 671B total parameters and 37B activated parameters, trained on 14.8T tokens.

An up-and-coming Hangzhou AI lab unveiled a model that implements run-time reasoning similar to OpenAI o1 and delivers competitive performance. How it works: DeepSeek-R1-lite-preview uses a smaller base model than DeepSeek-V2.5, which contains 236 billion parameters. Do they do step-by-step reasoning?

Unlike o1, it displays its reasoning steps. The model particularly excels at coding and reasoning tasks while using significantly fewer resources than comparable models. It’s part of an important movement, after years of scaling models by raising parameter counts and amassing larger datasets, toward achieving high performance by spending more energy on generating output. The additional performance comes at the cost of slower and more expensive output. Their product allows programmers to more easily integrate various communication methods into their software and applications.

How it works: "AutoRT leverages vision-language models (VLMs) for scene understanding and grounding, and further uses large language models (LLMs) for proposing diverse and novel instructions to be performed by a fleet of robots," the authors write.

For DeepSeek-V3, the communication overhead introduced by cross-node expert parallelism results in an inefficient computation-to-communication ratio of approximately 1:1. To tackle this challenge, the authors design a pipeline parallelism algorithm called DualPipe, which not only accelerates model training by overlapping forward and backward computation-communication phases, but also reduces the pipeline bubbles. Inspired by recent advances in low-precision training (Peng et al., 2023b; Dettmers et al., 2022; Noune et al., 2022), they propose a fine-grained mixed precision framework that uses the FP8 data format for training DeepSeek-V3. As illustrated in Figure 6 of the paper, the Wgrad operation is performed in FP8.
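To make "fine-grained" quantization more concrete, here is a minimal pure-Python sketch of per-group scaling. It is an illustration under stated assumptions, not DeepSeek's implementation: the E4M3 variant of FP8 (largest finite value 448), the group size of 128, and the function names `round_to_e4m3`, `quantize_groups` and `dequantize_groups` are all assumptions made for this example. The rounding is also simplified, since it ignores subnormals and special values.

```python
import math

FP8_E4M3_MAX = 448.0  # largest finite E4M3 value (assumed FP8 variant)


def round_to_e4m3(x: float) -> float:
    """Simplified E4M3 rounding: clamp, then keep 1 implicit + 3 explicit mantissa bits.

    Subnormals, NaN and exponent underflow are deliberately ignored.
    """
    if x == 0.0:
        return 0.0
    x = max(-FP8_E4M3_MAX, min(FP8_E4M3_MAX, x))
    mantissa, exponent = math.frexp(x)    # x = mantissa * 2**exponent, 0.5 <= |mantissa| < 1
    mantissa = round(mantissa * 16) / 16  # 4 significant binary digits
    return math.ldexp(mantissa, exponent)


def quantize_groups(values, group_size=128):
    """Quantize each group with its own scale so one outlier only affects its own group."""
    quantized, scales = [], []
    for start in range(0, len(values), group_size):
        group = values[start:start + group_size]
        amax = max(abs(v) for v in group)
        scale = amax / FP8_E4M3_MAX if amax > 0 else 1.0
        scales.append(scale)
        quantized.extend(round_to_e4m3(v / scale) for v in group)
    return quantized, scales


def dequantize_groups(quantized, scales, group_size=128):
    """Map quantized values back to the original range using the per-group scales."""
    return [q * scales[i // group_size] for i, q in enumerate(quantized)]


if __name__ == "__main__":
    # Two groups with very different magnitudes: small activations and large outliers.
    data = [0.001 * (i + 1) for i in range(128)] + [100.0 * (i + 1) for i in range(128)]
    q, s = quantize_groups(data)
    restored = dequantize_groups(q, s)
    worst = max(abs(r - v) / abs(v) for r, v in zip(restored, data))
    print(f"scales: {s}")
    print(f"worst relative error: {worst:.4f}")
```

Because each group carries its own scale, the small-magnitude group keeps its relative precision even though the other group contains values that are several orders of magnitude larger. A single tensor-wide scale would push the small values toward zero.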

The models are loosely based on Facebook’s LLaMa family of models, though they’ve replaced the cosine learning rate scheduler with a multi-step learning rate scheduler. Across different nodes, InfiniBand (IB) interconnects are used to facilitate communications. Another notable achievement of the DeepSeek LLM family is the LLM 7B Chat and 67B Chat models, which are specialized for conversational tasks.

We ran several large language models (LLMs) locally in order to figure out which one is the best at Rust programming. Mistral models are currently made with Transformers. Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 7B parameter) versions of their models.

Google researchers have built AutoRT, a system that uses large-scale generative models "to scale up the deployment of operational robots in completely unseen scenarios with minimal human supervision."

In the current process, we need to read 128 BF16 activation values (the output of the previous computation) from HBM (High Bandwidth Memory) for quantization, and the quantized FP8 values are then written back to HBM, only to be read again for MMA.

For budget constraints: if you're limited by budget, focus on DeepSeek GGML/GGUF models that fit within the system RAM. Suppose you have a Ryzen 5 5600X processor and DDR4-3200 RAM with a theoretical max bandwidth of 50 GBps. How much RAM do we need?
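The RAM question for the Ryzen 5 5600X example can be approached with a back-of-the-envelope estimate. The sketch below is only a rough model, and every function name in it is hypothetical. It assumes that weight storage is roughly parameters times bits per weight, that generating one token reads all weights once (so memory bandwidth caps throughput), and that a fixed 2 GB of headroom covers the operating system and context cache. The 4-bit figure and the 16 GB of installed RAM are illustrative choices, not values from the text, and real GGML/GGUF files carry some extra overhead.

```python
def model_size_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def max_tokens_per_second(bandwidth_gbps: float, size_gb: float) -> float:
    """Upper bound on decoding speed if every token streams all weights from RAM once."""
    return bandwidth_gbps / size_gb


def fits_in_ram(size_gb: float, ram_gb: float, headroom_gb: float = 2.0) -> bool:
    """Check whether the weights plus an assumed headroom fit in system RAM."""
    return size_gb + headroom_gb <= ram_gb


if __name__ == "__main__":
    bandwidth = 50.0  # GB/s, the theoretical DDR4-3200 figure quoted in the text
    ram = 16.0        # GB of installed RAM (illustrative assumption)
    for params in (7, 67):  # DeepSeek LLM sizes mentioned in the text
        size = model_size_gb(params, bits_per_weight=4)
        print(
            f"{params}B @ 4-bit: ~{size:.1f} GB, "
            f"fits in {ram:.0f} GB RAM: {fits_in_ram(size, ram)}, "
            f"upper bound ~{max_tokens_per_second(bandwidth, size):.1f} tokens/s"
        )
```

Treat the throughput figure as a ceiling rather than a prediction: real decoding speed is lower because of compute limits, cache behavior, and the gap between theoretical and sustained memory bandwidth.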
